Most businesses track review metrics inconsistently, focusing on whichever number happens to catch their attention that week. This ad-hoc approach produces data without insight, numbers without context, and activity without accountability. A structured measurement framework transforms reviews from a vanity exercise into a strategic asset that informs operational decisions and drives predictable growth.

The challenge lies not in gathering data but in identifying which metrics actually matter. Review platforms provide dozens of statistics, many of which reflect activity rather than impact. The difference between measuring everything and measuring what matters determines whether reputation management becomes a strategic function or remains an administrative task that consumes time without producing clarity.

Why most review metrics fail to inform decisions

The problem with typical review tracking is not that businesses measure too little but that they measure the wrong things, or measure the right things without connecting them to outcomes. A business might celebrate reaching 100 reviews without considering whether those reviews drove enquiries, influenced conversion rates, or improved customer retention. The number becomes the goal rather than a signal of underlying performance.

Vanity metrics create this trap. They show movement without revealing whether that movement creates value. Total review count is a vanity metric if it exists in isolation. So is average rating when examined without context about changes over time, comparison to competitors, or correlation with revenue trends. These numbers feel meaningful but do not inform action because they lack the strategic framing that connects measurement to business outcomes.

The most important measurement question is not "what are the numbers?" but "what do the numbers tell us to do?"

Effective measurement requires selecting metrics that reveal how your reputation influences customer behaviour and business results. This means tracking indicators that change in response to your efforts, that can be compared meaningfully across time periods, and that connect directly to enquiries, conversions, or retention. Without these connections, measurement becomes observation rather than analysis.

The core metrics that actually matter

A practical review measurement framework focuses on six core metrics that balance activity indicators with outcome measures. Each serves a specific purpose in understanding whether your reputation strategy is working and where adjustments are needed.

Six essential review metrics

- Review volume — the total number of reviews on your Google Business Profile. Indicates visibility, social proof strength, and the scale of your feedback collection efforts.

- Review velocity — the rate at which new reviews are added over time. Reveals whether your review generation process operates consistently or produces sporadic results.

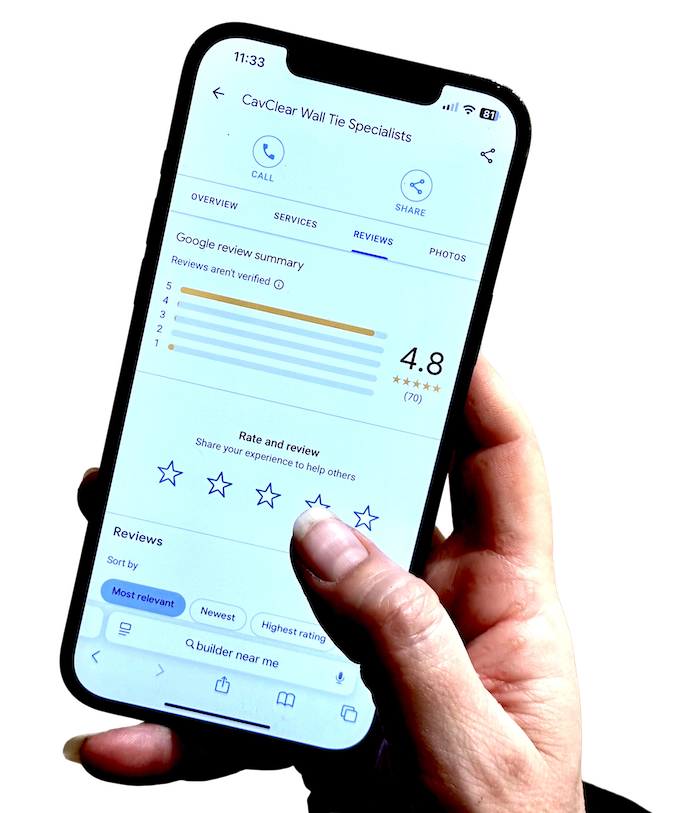

- Average rating — your overall star rating. Directly influences click-through rates, conversion likelihood, and local search visibility.

- Response rate — the percentage of reviews that receive a reply from your business. Demonstrates engagement and customer care to both reviewers and prospective customers reading the profile.

- Sentiment trends — the proportion of positive, neutral, and negative reviews over time. Identifies shifts in customer perception and highlights emerging service issues before they become patterns.

- Review-to-enquiry correlation — the relationship between periods of higher review activity and inbound enquiry volume. Links reputation directly to commercial outcomes.

These six metrics work together. Volume and velocity indicate whether you are generating feedback consistently. Average rating and sentiment reveal how customers perceive your business. Response rate shows how actively you engage with that feedback. The review-to-enquiry correlation indicates whether that activity is associated with commercial results. Tracking all six creates accountability across both activity and outcomes.

How to measure review velocity and why it matters

Review velocity is often overlooked in favour of total count, yet it provides more actionable insight. Velocity measures how many new reviews you receive per week or month, revealing whether your feedback collection process operates reliably or produces irregular results. A business receiving two reviews one month, ten the next, and none the month after has a velocity problem even if total volume eventually accumulates.

Consistent velocity matters for visibility as well as credibility. Google states that review count and review score factor into local search ranking. It does not publish how recency is weighted, but a steady flow of recent feedback is widely believed to support local visibility better than sporadic bursts, and it keeps the newest reviews on your profile current for prospective customers. Velocity also signals operational health: businesses with a structured review generation process maintain predictable velocity, while those relying on ad-hoc requests see erratic patterns that undermine both visibility and credibility.

Review velocity

Review velocity is calculated by dividing the number of new reviews received during a period by the number of weeks or months in that period. For example, 12 reviews in three months equals a velocity of four reviews per month. Tracking this monthly allows you to identify trends, assess the impact of process changes, and set realistic targets for sustained growth. A business maintaining a velocity of four to six reviews per month can outperform a competitor with higher total volume but stalled velocity, because its profile keeps showing recent feedback.
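The calculation is simple enough to sketch in a few lines of Python. The sketch also adds one measure the text does not name: the coefficient of variation (standard deviation divided by the mean) of monthly counts. It is used here as an assumed, illustrative way to tell steady velocity apart from the erratic "two, ten, none" pattern, since both produce the same average.

```python
from statistics import mean, pstdev


def review_velocity(new_reviews: int, periods: int) -> float:
    """New reviews per period (per week or per month) across a reporting window."""
    if periods <= 0:
        raise ValueError("periods must be a positive number of weeks or months")
    return new_reviews / periods


def velocity_variability(monthly_counts: list[int]) -> float:
    """Coefficient of variation of monthly new-review counts.

    0.0 means perfectly steady collection; higher values mean more erratic
    collection. This is an illustrative choice, not a standard review metric.
    """
    average = mean(monthly_counts)
    if average == 0:
        return 0.0  # no reviews at all, so there is no variation to measure
    return pstdev(monthly_counts) / average


steady = [4, 4, 4]     # hypothetical monthly counts
erratic = [2, 10, 0]   # the same 12 reviews, collected sporadically

print(review_velocity(sum(steady), len(steady)))    # 4.0 reviews per month
print(review_velocity(sum(erratic), len(erratic)))  # 4.0 reviews per month
print(round(velocity_variability(steady), 2))       # 0.0
print(round(velocity_variability(erratic), 2))      # 1.08
```

Both profiles report the same velocity; only the variability figure exposes the inconsistency that signals a manual, ad-hoc request process.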

When velocity fluctuates significantly, it often indicates that requests depend on manual effort rather than a consistent process. Stabilising velocity requires embedding review generation into your operational rhythm so that feedback collection occurs reliably regardless of team workload or competing priorities.

What your Google rating actually means — understanding the thresholds

Average rating is the most visible review metric, but its significance varies considerably depending on where you sit on the scale. Understanding what different rating thresholds mean in practice — for search visibility, for conversion likelihood, and for customer perception — helps you set meaningful targets rather than simply aiming for "higher."

Google rating thresholds and what they signal

- Below 4.0 — a significant conversion barrier for most categories. Consumer surveys frequently report that prospective customers apply a mental filter at this threshold, particularly for services involving trust or access to their home. Moving from below 4.0 to above it is often the single rating improvement with the largest impact on conversion.

- 4.0 to 4.2 — in this range you are considered credible but not distinguished. Customers will enquire, but may still compare alternatives. The priority at this level is both improving the rating and increasing volume: a 4.2 with 8 reviews is far more vulnerable than a 4.2 with 80 reviews, because a single negative review moves a small sample's average much further.

- 4.3 to 4.7 — this range is where most well-regarded local businesses operate. A rating in this band combined with consistent recent reviews and a high response rate is typically sufficient to convert customers who are actively searching. Volume and recency matter more than marginal rating improvement at this level.

- 4.8 and above — an outstanding rating that carries genuine credibility, provided the volume of reviews is substantial. A 4.9 based on 11 reviews invites scepticism. A 4.8 based on 120 reviews is a strong competitive differentiator.
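As a rough sketch, the bands can be encoded as a lookup, and a one-line average calculation shows why volume matters at any rating level. The code assumes the displayed rating is the exact mean of all star ratings, which is a simplification because the displayed figure is rounded to one decimal place. It places 4.3 in the well-regarded band, and the band labels are shorthand for the descriptions above.

```python
def rating_band(rating: float) -> str:
    """Map a displayed rating to the threshold bands described in the text."""
    if rating < 4.0:
        return "conversion barrier"
    if rating < 4.3:
        return "credible but not distinguished"
    if rating < 4.8:
        return "well-regarded"
    return "outstanding (if volume is substantial)"


def rating_after_new_review(average: float, count: int, new_stars: int) -> float:
    """Average after one more review, assuming `average` is the exact mean."""
    return (average * count + new_stars) / (count + 1)


# One 1-star review arriving on two profiles that both show 4.2
small = rating_after_new_review(4.2, 8, 1)
large = rating_after_new_review(4.2, 80, 1)

print(round(small, 2), rating_band(round(small, 1)))  # 3.84 conversion barrier
print(round(large, 2), rating_band(round(large, 1)))  # 4.16 credible but not distinguished
```

The same single review pulls the smaller profile below 4.0 but barely moves the larger one, which is the vulnerability behind the 4.2 comparison.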

The practical implication is that your rating target should be informed by where you currently sit and what move would have the greatest impact. Moving from 3.8 to 4.1 is likely to produce a more material improvement in enquiry volume than moving from 4.5 to 4.7. Prioritise accordingly.
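Rating targets also have a cost in reviews. Under two simplifying assumptions (the displayed rating is the exact mean, and every new review is five stars), the number of new reviews k needed to lift an average r across n existing reviews to a target t satisfies (r*n + 5*k) / (n + k) >= t, which rearranges to k >= n*(t - r) / (5 - t). A minimal Python sketch of that calculation, with a hypothetical 20-review profile:

```python
from fractions import Fraction
from math import ceil


def five_star_reviews_needed(current: float, count: int, target: float) -> int:
    """Minimum number of new five-star reviews needed to lift the mean to `target`.

    Assumes `current` is the exact mean of `count` reviews and that every new
    review is five stars. Fractions avoid floating-point error at exact boundaries.
    """
    r, t = Fraction(str(current)), Fraction(str(target))
    if r >= t:
        return 0
    if t >= 5:
        raise ValueError("5.0 cannot be reached while any existing review is below five stars")
    return ceil(count * (t - r) / (5 - t))


# Hypothetical profile with 20 existing reviews
print(five_star_reviews_needed(3.8, 20, 4.1))  # 7
print(five_star_reviews_needed(4.5, 20, 4.7))  # 14
```

In this hypothetical, the move from 3.8 to 4.1 also needs fewer perfect reviews than the move from 4.5 to 4.7, because each additional five-star review lifts a lower average further.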

Connecting sentiment analysis to operational improvement

Sentiment analysis moves beyond star ratings to examine the language and themes within reviews. While average rating tells you whether customers are generally satisfied, sentiment analysis reveals why they feel that way — and what specific aspects of your service are driving positive or negative responses. This granularity makes sentiment one of the most strategically valuable metrics available.

Identifying sentiment patterns requires reading reviews with a categorisation lens rather than responding to each individually. Positive reviews might consistently mention staff helpfulness, speed of service, or attention to detail. Negative reviews might repeatedly reference wait times, unclear pricing, or difficulty finding the premises. These patterns provide operational intelligence that star ratings alone cannot deliver.
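A first pass at that categorisation can be as simple as counting how many reviews mention each theme. The sketch below uses plain keyword matching, a deliberately crude stand-in for proper sentiment analysis. The theme names, keywords, and sample reviews are all hypothetical and would need to reflect the language your own customers use.

```python
from collections import Counter

# Hypothetical themes and the keywords taken to signal them.
THEMES: dict[str, tuple[str, ...]] = {
    "wait times": ("wait", "slow", "queue"),
    "pricing": ("price", "expensive", "overcharged"),
    "staff": ("friendly", "helpful", "rude"),
}


def theme_counts(reviews: list[str]) -> Counter:
    """Count how many reviews mention each theme (each review counted once per theme)."""
    counts: Counter = Counter()
    for text in reviews:
        lowered = text.lower()
        for theme, keywords in THEMES.items():
            # Substring matching is crude: "wait" would also match "waiter".
            if any(keyword in lowered for keyword in keywords):
                counts[theme] += 1
    return counts


sample = [
    "Great food but a long wait for a table.",
    "Staff were friendly and helpful.",
    "Service was slow and the bill felt expensive.",
    "Lovely evening, we will be back.",
]
for theme, n in theme_counts(sample).most_common():
    print(f"{theme}: {n} of {len(sample)} reviews")
```

Tracking these counts month by month, rather than reading reviews one at a time, is what turns individual complaints into a visible pattern.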

A restaurant — sentiment analysis driving a measurable operational change

A restaurant reviewed six months of feedback and identified that a significant proportion of negative reviews mentioned difficulty securing tables during peak periods. This pattern prompted them to restructure their booking process and add capacity for walk-ins on quieter nights. Within three months, booking-related complaints had largely disappeared from incoming reviews, and overall rating improved. The improvement was not driven by asking for more reviews — it was driven by fixing the thing customers kept mentioning.

Sentiment analysis becomes genuinely useful when findings are routed to the people who can act on them. If multiple reviews mention product quality issues, that information belongs with the person responsible for procurement or service delivery. If pricing confusion appears frequently, it signals a need for clearer communication at the point of sale. Without this translation from observation to action, sentiment analysis remains descriptive rather than operational.

Why response rate influences both reviewers and prospective customers

Response rate measures what percentage of reviews receive a reply from your business. While it might appear to be a simple engagement metric, it significantly influences how both existing reviewers and prospective customers perceive your business. High response rates signal attentiveness and care; low rates suggest indifference, regardless of how strong the rest of the profile looks.

Prospective customers reading your reviews assess not just what reviewers say but how you engage with that feedback. A thoughtful response to a critical review often builds more confidence than a string of unanswered praise, because it demonstrates that the business takes feedback seriously and will handle problems if they arise.

Aim for a response rate of 80 percent or above — and prioritise negative reviews first

Responding to every positive review with a brief, genuine acknowledgement is good practice. Responding to every negative review promptly and professionally is essential. A profile where the business has responded to criticism thoughtfully is a materially stronger commercial asset than one where complaints sit unanswered — regardless of how many five-star reviews surround them.
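Both the rate and the priority order are easy to compute from a list of reviews. In this sketch the `Review` record and its fields are hypothetical, standing in for whatever export or listing your platform provides; the 80 percent target comes from the guidance above.

```python
from dataclasses import dataclass


@dataclass
class Review:
    author: str      # hypothetical fields standing in for a platform export
    stars: int
    responded: bool


def response_rate(reviews: list[Review]) -> float:
    """Percentage of reviews that have a reply from the business."""
    if not reviews:
        return 0.0
    return 100 * sum(r.responded for r in reviews) / len(reviews)


def reply_queue(reviews: list[Review]) -> list[Review]:
    """Unanswered reviews, lowest star rating first, so criticism is handled first."""
    return sorted((r for r in reviews if not r.responded), key=lambda r: r.stars)


reviews = [
    Review("A", 5, True), Review("B", 5, False), Review("C", 2, False),
    Review("D", 4, True), Review("E", 1, False), Review("F", 5, True),
    Review("G", 5, True), Review("H", 4, True), Review("I", 5, True),
    Review("J", 3, True),
]

TARGET = 80.0
rate = response_rate(reviews)
print(f"Response rate: {rate:.0f}% ({'meets' if rate >= TARGET else 'below'} {TARGET:.0f}% target)")
print("Reply next to:", [r.author for r in reply_queue(reviews)])
```

Sorting the unanswered reviews by star rating puts the 1-star and 2-star reviews at the front of the queue, matching the advice to handle criticism first.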

Linking review metrics to enquiry volume and business outcomes

The ultimate test of any metric is whether it connects to business outcomes. Reviews exist not for their own sake but because they influence customer acquisition, conversion, and retention. Measuring this influence transforms review management from a marketing activity into a commercial function with observable return.

The most direct connection involves tracking enquiry volume alongside review activity. When review velocity increases, do enquiries rise? When average rating improves, does conversion follow? These correlations indicate whether reputation improvements appear to translate into commercial results or remain isolated from the buying process, although seasonal demand and other marketing activity can move both numbers at once. Without this linkage, you cannot assess whether investment in review generation is actually working.

Implementing a review measurement process

- Establish baseline metrics — record your current review volume, velocity, average rating, response rate, sentiment distribution, and monthly enquiry count. This is your starting point for all future comparison.

- Decide on a tracking cadence — monthly is right for most local businesses. It provides sufficient granularity to identify trends without creating administrative burden.

- Create a simple tracking record — a spreadsheet with columns for each metric, period-over-period change, and notes about significant operational changes or events that might explain movements.

- Review trends quarterly — every three months, look across all metrics. Which are improving? Which are static? Are there correlations between review activity and enquiry volume that warrant investigation?

- Connect findings to operational decisions — if velocity is declining, examine your review request process. If sentiment is shifting, look for corresponding operational changes. Metrics should inform action, not populate reports that no one reads.

- Adjust your approach based on results — measurement only produces value when it changes what you do. Build a habit of asking "what should we do differently?" after every quarterly review.
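The tracking record described in these steps can live in any spreadsheet. As a sketch, the Python below takes hypothetical monthly rows (the column names and figures are assumptions, not a prescribed schema), adds a period-over-period change column for each metric, and writes the result as CSV that a spreadsheet can open.

```python
import csv
import io

# Hypothetical baseline month followed by two tracked months.
MONTHS = [
    {"month": "Month 1", "total_reviews": 42, "new_reviews": 3, "avg_rating": 4.3,
     "response_rate": 60, "enquiries": 28, "notes": "Baseline"},
    {"month": "Month 2", "total_reviews": 48, "new_reviews": 6, "avg_rating": 4.4,
     "response_rate": 75, "enquiries": 31, "notes": "Review requests added to job sign-off"},
    {"month": "Month 3", "total_reviews": 53, "new_reviews": 5, "avg_rating": 4.4,
     "response_rate": 85, "enquiries": 33, "notes": ""},
]
METRICS = ("total_reviews", "new_reviews", "avg_rating", "response_rate", "enquiries")


def add_period_changes(rows: list[dict]) -> list[dict]:
    """Copy each row and add '<metric>_change' versus the previous month (None for the first)."""
    result, previous = [], None
    for row in rows:
        out = dict(row)
        for metric in METRICS:
            out[f"{metric}_change"] = (
                None if previous is None else round(row[metric] - previous[metric], 2)
            )
        result.append(out)
        previous = row
    return result


def to_csv(rows: list[dict]) -> str:
    """Render rows as CSV text; None values become empty cells."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


print(to_csv(add_period_changes(MONTHS)))
```

Keeping a free-text notes column next to the numbers preserves the context (process changes, seasonal events) that the quarterly review needs to explain movements.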

A template for monthly review reporting

Consistent reporting keeps reputation performance visible and integrated into operational planning. A simple monthly template creates accountability and makes trends immediately apparent without requiring significant time to compile.

Monthly review report — suggested structure

- Reporting period — month and year

- Review volume — total reviews on the profile at month end and new reviews added this month, with comparison to the previous month and the same month last year

- Review velocity — new reviews per week, trend direction (increasing / stable / declining)

- Average rating — current overall rating, change from previous month

- Response rate — percentage of reviews responded to, comparison to 80% target

- Sentiment breakdown — proportion positive / neutral / negative, notable recurring themes

- Enquiry correlation — total enquiries this month, any observable connection to review activity

- Actions taken — specific operational or process changes made in response to feedback patterns

- Next month priorities — one to three targeted improvements for the following period
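Because the structure stays fixed, the report itself can be generated from the month's figures. The sketch below fills in part of the template. The input field names and the 10 percent tolerance used to label a trend as increasing or declining are assumptions, the same-month-last-year comparison is left out, and the narrative sections (actions taken, next month priorities) are passed in as text rather than derived.

```python
def trend_direction(current: float, previous: float, tolerance: float = 0.10) -> str:
    """Label a change as increasing / stable / declining (10% tolerance is an assumption)."""
    if previous == 0:
        return "increasing" if current > 0 else "stable"
    change = (current - previous) / previous
    if change > tolerance:
        return "increasing"
    if change < -tolerance:
        return "declining"
    return "stable"


def monthly_report(period: str, now: dict, prev: dict, actions: list[str],
                   priorities: list[str], response_target: float = 80.0) -> str:
    """Render part of the monthly template from this month's and last month's figures."""
    sentiment_total = now["positive"] + now["neutral"] + now["negative"]
    sentiment = " / ".join(
        f"{label} {100 * now[label] / sentiment_total:.0f}%" if sentiment_total else f"{label} n/a"
        for label in ("positive", "neutral", "negative")
    )
    lines = [
        f"Reporting period: {period}",
        f"Review volume: {now['total_reviews']} ({now['total_reviews'] - prev['total_reviews']:+d} vs previous month)",
        f"Review velocity: {now['per_week']:.1f} per week ({trend_direction(now['per_week'], prev['per_week'])})",
        f"Average rating: {now['rating']:.1f} ({now['rating'] - prev['rating']:+.1f} vs previous month)",
        f"Response rate: {now['response_rate']:.0f}% (target {response_target:.0f}%)",
        f"Sentiment breakdown: {sentiment}",
        f"Enquiries: {now['enquiries']}",
        "Actions taken: " + "; ".join(actions),
        "Next month priorities: " + "; ".join(priorities[:3]),  # one to three items
    ]
    return "\n".join(lines)


# Hypothetical figures for two consecutive months
prev = {"total_reviews": 48, "per_week": 1.2, "rating": 4.3}
now = {"total_reviews": 53, "per_week": 1.5, "rating": 4.4, "response_rate": 72,
       "positive": 4, "neutral": 0, "negative": 1, "enquiries": 33}

print(monthly_report("Month 3", now, prev,
                     actions=["Moved review requests to the day of completion"],
                     priorities=["Reply to all reviews within 48 hours"]))
```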

The key is consistency — using the same format each month makes trends immediately visible and comparisons meaningful. A report that changes structure every quarter makes it impossible to identify whether performance is genuinely improving or simply being measured differently.

Avoiding common measurement mistakes

Even businesses that track metrics regularly fall into predictable traps that undermine the value of measurement. Recognising these makes it easier to build a practice that produces insight rather than simply generating numbers.

The first mistake is tracking too many metrics without prioritising the ones that drive decisions. When a report contains 20 data points, none receives adequate attention. Start with the six core metrics, then add additional tracking only when those six are consistently reviewed and acted upon.

The second mistake is comparing yourself only to competitors rather than to your own past performance. Competitor benchmarking has value, but your primary reference should be period-over-period improvement. A business whose rating has risen from 4.1 to 4.3 is making more progress than one holding steady at 4.5, even though its absolute number is lower.

The third mistake is treating metrics as endpoints rather than prompts. Measurement without action is observation. Each metric should trigger a response when it moves outside expected ranges. Declining velocity should prompt a review of your request process. A falling response rate should initiate a conversation about whether the right person is handling incoming reviews.
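Those triggers can be written down as explicit rules so that a monthly check produces prompts rather than just numbers. The rules and thresholds in this sketch (a 10 percent drop in velocity, the 80 percent response target, any rise in the share of negative reviews) are illustrative assumptions drawn from the examples in this article; each business would tune its own expected ranges.

```python
def metric_prompts(current: dict, previous: dict, response_target: float = 80.0,
                   tolerance: float = 0.10) -> list[str]:
    """Return a prompt for each metric that has moved outside its expected range."""
    prompts = []
    # Velocity falling by more than the tolerance (10% by default, an assumption).
    if current["velocity"] < previous["velocity"] * (1 - tolerance):
        prompts.append("Velocity is declining: review the review request process.")
    if current["response_rate"] < response_target:
        prompts.append("Response rate is below target: check who is handling incoming reviews.")
    # Any rise in the share of negative reviews counts as a shift here.
    if current["negative_share"] > previous["negative_share"]:
        prompts.append("Negative sentiment is rising: look for recurring themes and recent operational changes.")
    return prompts


# Hypothetical figures for two consecutive months
last_month = {"velocity": 5.0, "response_rate": 85.0, "negative_share": 0.10}
this_month = {"velocity": 3.0, "response_rate": 70.0, "negative_share": 0.10}

for prompt in metric_prompts(this_month, last_month):
    print(prompt)
```

An empty list means every metric is within its expected range; anything else is a question to take into the quarterly review.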

Turning measurement into strategic advantage

The businesses that extract the most value from review measurement treat metrics as questions rather than answers. Numbers reveal where to look; investigation reveals what to do. This distinction separates businesses that measure for compliance from those that measure for improvement.

When review velocity drops, the metric itself does not explain why. It prompts you to look at whether your request process has drifted from its original consistency, whether customer journey touchpoints have changed, or whether seasonal patterns are at play. The metric creates the question; operational knowledge and customer feedback provide the answer.

When sentiment shifts negative, the metric identifies the pattern but not the cause. You must examine which specific themes are appearing more frequently, whether they correlate with recent operational changes, and whether similar patterns are appearing across different customer segments. The metric directs attention; investigation provides the context that turns data into understanding and understanding into action.

Want to see what a managed review process measures and reports?

Trusted Reviews 4U builds your personalised review page and manages the entire request process, with portal visibility into review volume, velocity, and the concerns raised before they reach Google.